Overview

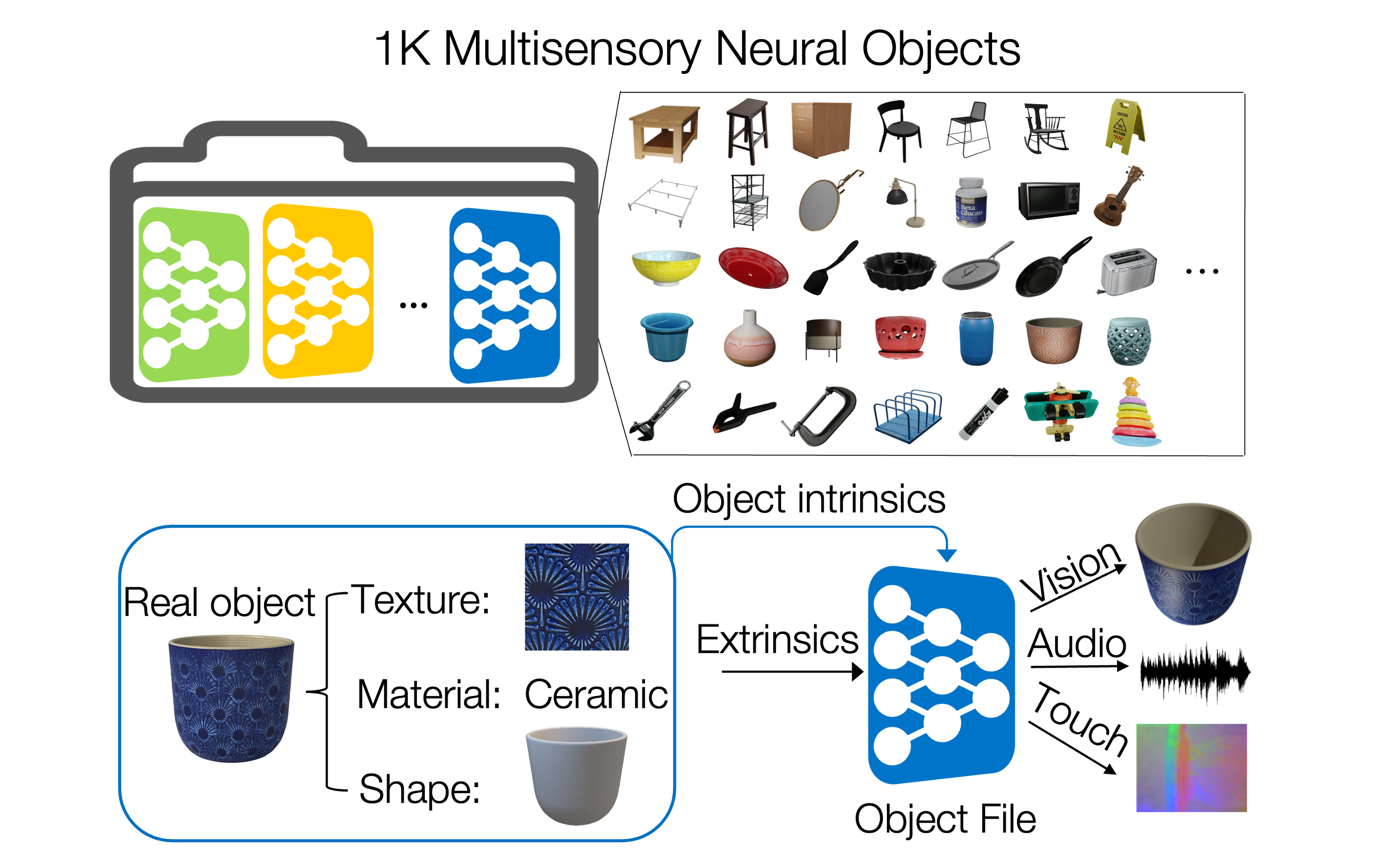

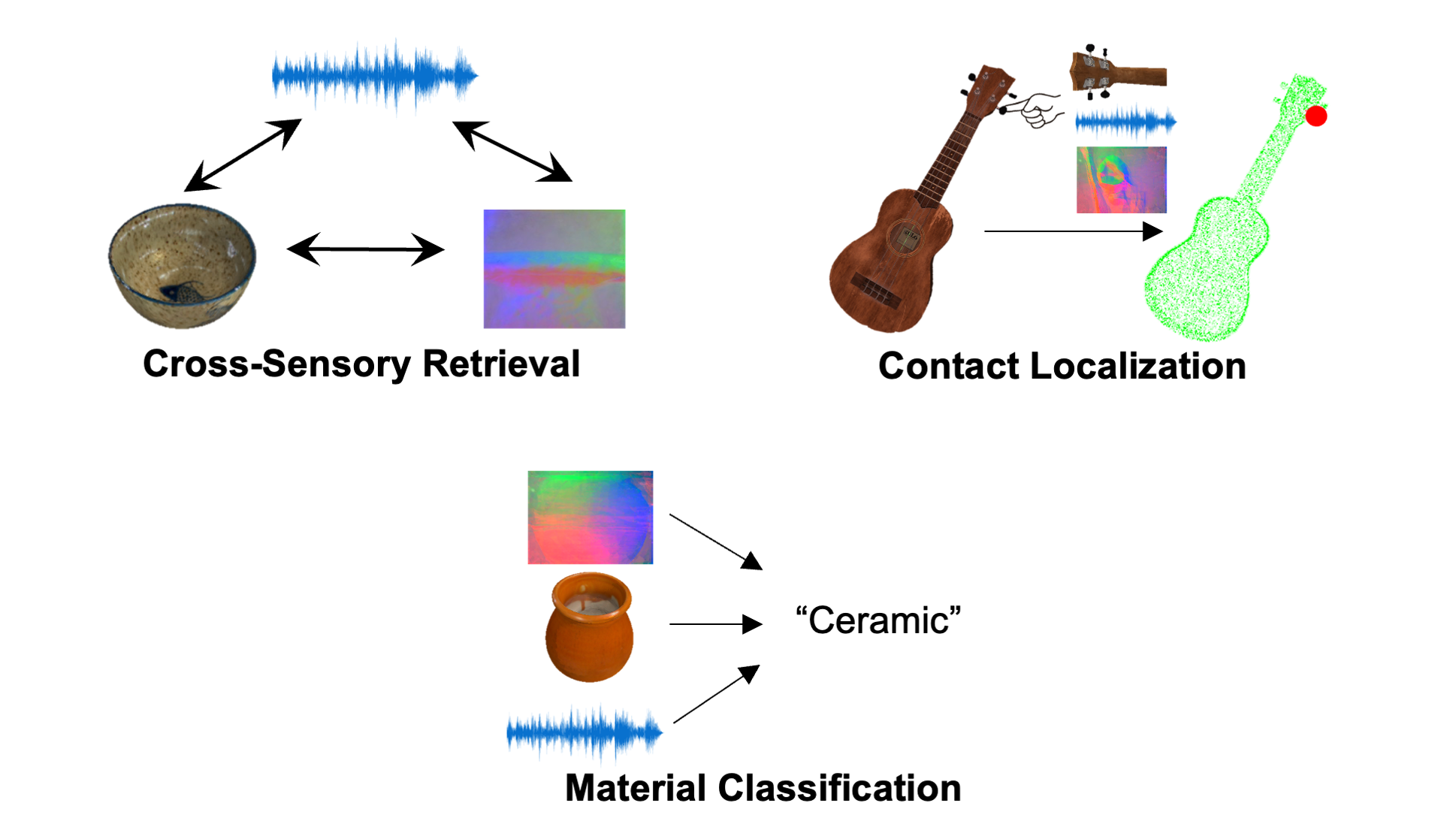

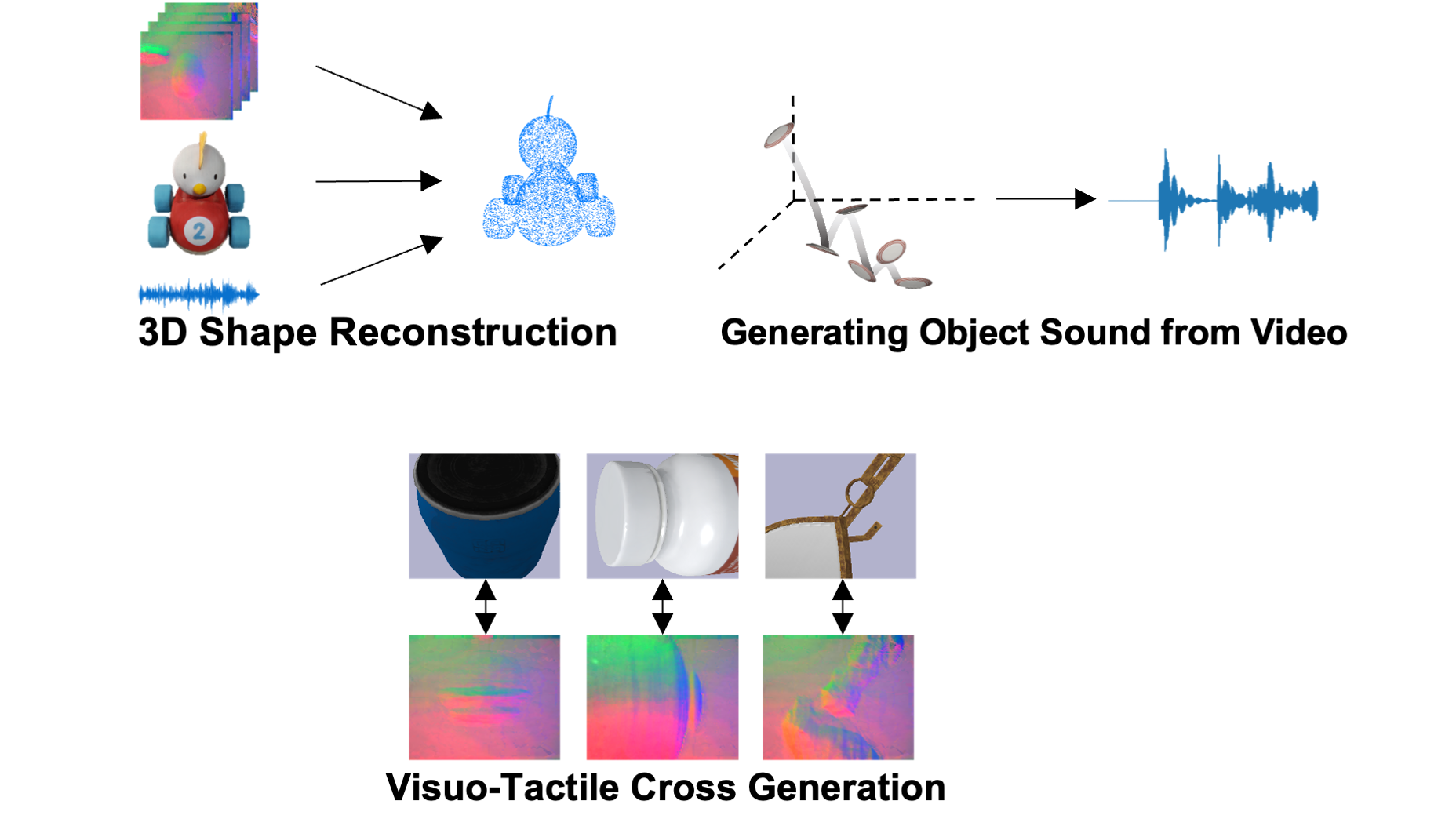

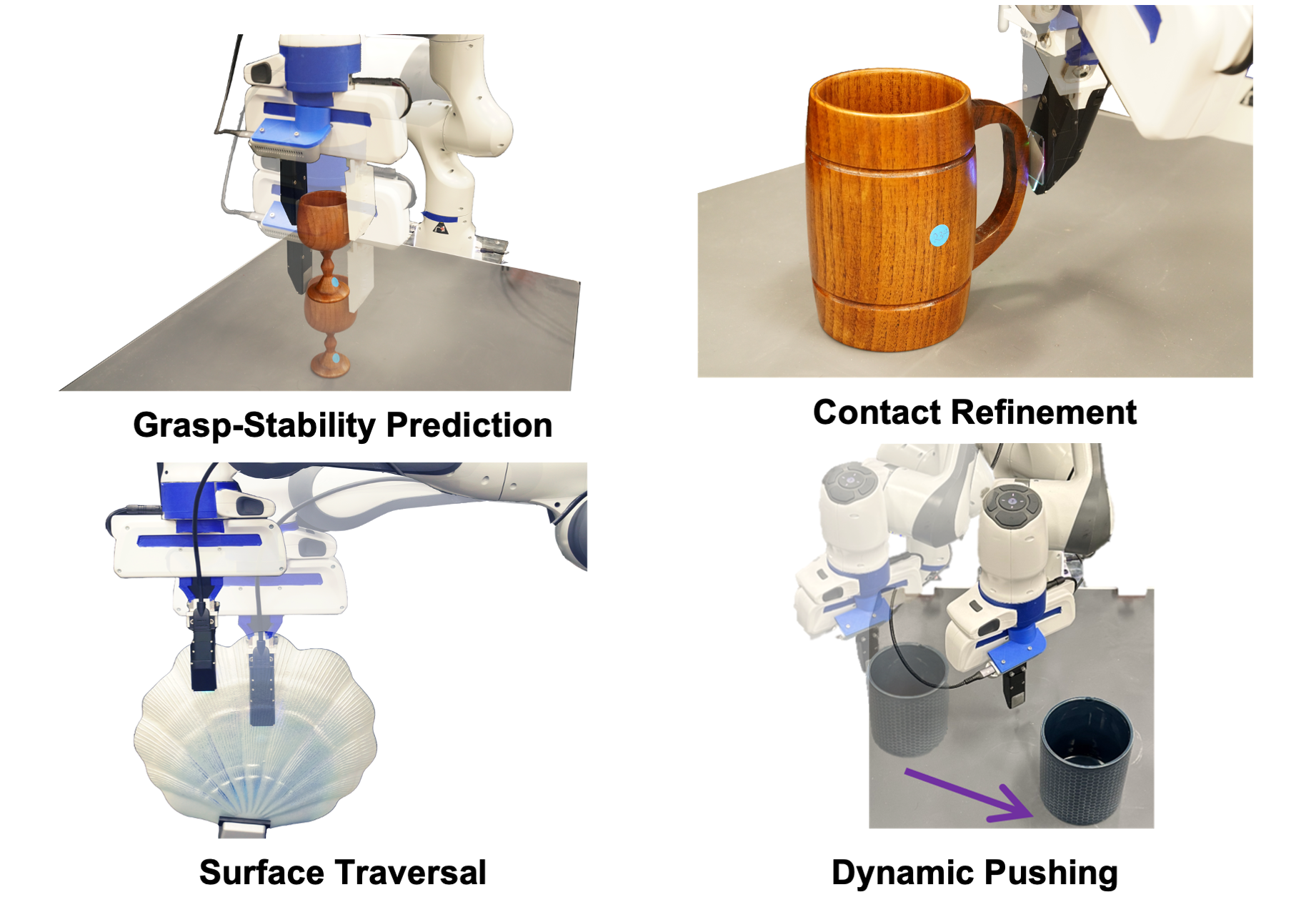

ObjectFolder models the multisensory behaviors of real objects through two datasets. The first is ObjectFolder 2.0, a dataset of 1,000 neural objects in the form of implicit neural representations, with simulated multisensory data. The second is ObjectFolder Real, which contains multisensory measurements for 100 real-world household objects. These measurements were collected with a newly designed pipeline that captures each object's 3D mesh, videos, impact sounds, and tactile readings. ObjectFolder also includes a standard benchmark suite of 10 tasks for multisensory object-centric learning, centered on object recognition, reconstruction, and manipulation with sight, sound, and touch. We open-source both datasets and the benchmark suite to enable new research in multisensory object-centric learning in computer vision, robotics, and beyond.

ObjectFolder Datasets

ObjectFolder Real

ObjectFolder 2.0

ObjectFolder Benchmarks

Object Recognition

Object Reconstruction

Object Manipulation

Publications

- The ObjectFolder Benchmark: Multisensory Learning with Neural and Real Objects

  Ruohan Gao\*, Yiming Dou\*, Hao Li\*, Tanmay Agarwal, Jeannette Bohg, Yunzhu Li, Li Fei-Fei and Jiajun Wu

  In Conference on Computer Vision and Pattern Recognition (CVPR), 2023.

- ObjectFolder 2.0: A Multisensory Object Dataset for Sim2Real Transfer

  Ruohan Gao\*, Zilin Si\*, Yen-Yu Chang\*, Samuel Clarke, Jeannette Bohg, Li Fei-Fei, Wenzhen Yuan and Jiajun Wu

  In Conference on Computer Vision and Pattern Recognition (CVPR), 2022.

- ObjectFolder: A Dataset of Objects with Implicit Visual, Auditory, and Tactile Representations

  Ruohan Gao, Yen-Yu Chang\*, Shivani Mall\*, Li Fei-Fei and Jiajun Wu

  In the 5th Conference on Robot Learning (CoRL), 2021.